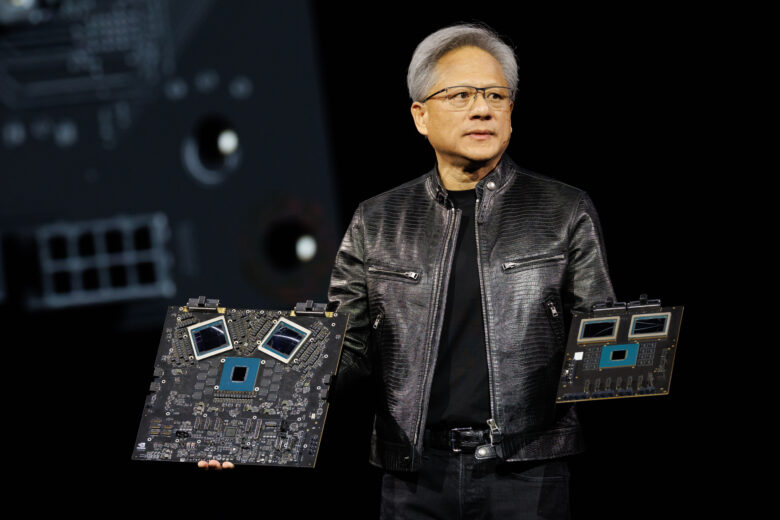

Nvidia Bets $26 Billion on Open-Source AI to Build a New Moat Next to CUDA

Despite all concerns about an AI bubble, Nvidia remains the world’s most valuable company, with a valuation of 4.45 trillion dollars. Previously known primarily for hardware (its chips power GenAI), Nvidia now apparently wants to enter the business of AI models themselves on a large scale.

The chip manufacturer plans to invest 26 billion dollars over the next five years in the development of open-weight AI models. The figure comes from a 2025 financial filing and has been confirmed by company representatives. The first step in this direction is the release of Nemotron 3 Super, a 120-billion-parameter model specifically optimized for autonomous AI agents.

Nemotron 3 Super: Technical Innovation for AI Agents

The newly released Nemotron 3 Super combines three architectural components: Mamba-2 state-space layers for efficient processing of long token sequences, Transformer attention layers for precise retrieval, and a novel “Latent MoE” design. The model uses 12 billion active parameters out of a total of 120 billion and features a context window of one million tokens.

A special feature is native training in NVFP4, Nvidia’s 4-bit floating-point format. Unlike post-hoc compression, the model learned from the beginning to work precisely with 4-bit arithmetic. Compared to predecessor models, Nemotron 3 Super delivers more than five times higher throughput and is, according to Nvidia, 2.2 times faster than OpenAI’s GPT-OSS 120B and 7.5 times faster than Alibaba’s Qwen3.5-122B.
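To put the 4-bit format and the 12-of-120-billion split in perspective, the following is a rough back-of-the-envelope sketch, not a figure from Nvidia. It assumes weights are stored at exactly 4 or 16 bits each and ignores the per-block scaling factors that a format like NVFP4 carries, as well as activations and cache memory. The function name `weight_storage_gb` is made up for illustration.

```python
def weight_storage_gb(num_params: float, bits_per_param: float) -> float:
    """Raw weight storage in gigabytes (1 GB = 1e9 bytes).

    Assumption: every parameter uses exactly `bits_per_param` bits;
    scale factors and other format overhead are ignored.
    """
    return num_params * bits_per_param / 8 / 1e9


TOTAL_PARAMS = 120e9   # total parameters, from the article
ACTIVE_PARAMS = 12e9   # active parameters, from the article

fp16_gb = weight_storage_gb(TOTAL_PARAMS, 16)    # 16-bit baseline
fp4_gb = weight_storage_gb(TOTAL_PARAMS, 4)      # 4-bit weights
active_fraction = ACTIVE_PARAMS / TOTAL_PARAMS   # share of weights in use

print(f"16-bit weights: {fp16_gb:.0f} GB")
print(f"4-bit weights:  {fp4_gb:.0f} GB")
print(f"Active share:   {active_fraction:.0%}")
```

Under these simplified assumptions, 4-bit storage cuts the raw weight footprint to a quarter of a 16-bit baseline, and only about a tenth of the weights are active at any one time, which is consistent with the throughput emphasis in Nvidia’s claims.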

The complete training pipeline is publicly accessible: weights on Hugging Face, 10 trillion curated pretraining tokens (out of a total of 25 trillion tokens seen during training), 40 million post-training samples, and reinforcement learning recipes. Companies such as Perplexity, Palantir, Cadence, and Siemens are reportedly already integrating the model into their workflows.

26 Billion Investment: The Largest Open-Source AI Bet in History

The announced investment of 26 billion dollars far exceeds previous AI development budgets. For comparison, OpenAI is said to have spent about 3 billion dollars on training GPT-4. No other company is known to be investing this much money in open-source AI.

Bryan Catanzaro, Vice President of Applied Deep Learning Research at Nvidia, confirmed to Wired that the company recently completed pretraining of a 550-billion-parameter model. According to the financial filing, the funds are to flow into model development, computing infrastructure, research talent, and “ecosystem development,” which likely includes partnerships and possibly acquisitions.

Jack Dorsey, who recently laid off 40 percent of Block’s workforce in favor of AI initiatives, called the investment “excellent.”

Strategic Motivation: Defending the CUDA Ecosystem

Nvidia’s market position rests less on hardware than on CUDA, the software ecosystem the company has built over nearly 20 years. CUDA has over 4 million developers and more than 3,000 optimized applications, and it is deeply integrated into all major AI frameworks. Universities teach CUDA, and research papers are built on it.

However, this dominance is coming under pressure. According to benchmarks, AMD’s MI355X delivers 30 percent faster inference than Nvidia’s B200 and approximately 40 percent more tokens per dollar. AMD’s ROCm 7.0 offers native support for PyTorch and JAX. OpenAI’s Triton compiler enables teams to run models on AMD and Intel hardware without rewriting code.

An analysis by Built In from January 2026 argued that CUDA’s dominance has reached a turning point as hardware-agnostic compilers gain importance. Nvidia holds 86 percent of data center GPU revenue in 2026, down from about 90 percent in 2024.

The Android Strategy with a Decisive Difference

The strategy resembles Google’s approach with Android. Google made Android open source to ensure that Google Search became the default search engine on every smartphone. Nvidia makes its models openly available so that developers build on them, and each model is optimized for Nvidia hardware, where the actual revenue is generated.

The decisive difference: Google did not sell hardware to Samsung and HTC. Nvidia sells chips to Microsoft, Amazon, Google, and Meta, the four hyperscalers that are simultaneously its largest customers and most motivated competitors. All four are developing their own chips to reduce Nvidia dependence: Microsoft’s Maia, Amazon’s Trainium, Google’s TPUs, and Meta’s MTIA chips.

Risks of the Strategy

The investment carries a substantial risk: if Nvidia begins competing with its largest customers at the model level, their incentive to accelerate their own chip programs increases. Hyperscalers plan to invest 660 billion dollars in AI infrastructure in 2026. If even a fraction of this spending is redirected from Nvidia GPUs to proprietary chips because Nvidia is now a competitor, the financial impact far exceeds the 26 billion dollar investment.
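The scale of that risk can be illustrated with simple arithmetic on the two figures above. This is only a sketch: it assumes the 26 billion dollars is spread evenly over five years, and it compares an investment budget with customer spending, which are not strictly like-for-like. Not all of the 660 billion dollars goes to Nvidia GPUs in the first place.

```python
NVIDIA_OPEN_MODEL_BUDGET = 26e9   # dollars over five years, from the article
HYPERSCALER_CAPEX_2026 = 660e9    # dollars planned for 2026, from the article
YEARS = 5

# Assumption: the budget is spent evenly across the five years.
annual_budget = NVIDIA_OPEN_MODEL_BUDGET / YEARS

# Share of one year's hyperscaler spending that equals the whole program.
breakeven_share = NVIDIA_OPEN_MODEL_BUDGET / HYPERSCALER_CAPEX_2026

# Share of one year's hyperscaler spending that equals one year of the program.
annual_share = annual_budget / HYPERSCALER_CAPEX_2026

print(f"Annual budget:           {annual_budget / 1e9:.1f} billion dollars")
print(f"Whole program vs. capex: {breakeven_share:.1%}")
print(f"One year vs. capex:      {annual_share:.1%}")
```

On these assumptions, a shift of roughly 4 percent of a single year’s hyperscaler spending would equal the entire five-year program, and under 1 percent would equal one year of it.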

Nvidia’s defense lies in its annual product cycle. Blackwell shipped in 2025, Vera Rubin follows in the third quarter of 2026 with HBM4 support, and Rubin Ultra is due in the second half of 2027. Jensen Huang calls himself “Chief Revenue Destroyer” because each generation intentionally makes the previous one obsolete.

The bet is that Nvidia can keep its hardware lead large enough that even motivated competitors cannot close the gap in time, while the open-model strategy reinforces software dependence. Whether this bet pays off depends on execution. Nvidia spent 20 years building the CUDA moat. Now the company has five years and 26 billion dollars to build the next one.